In IoT (Internet of Things), we talk a lot about the Cloud. By the Cloud, we actually mean all the data stored on remote servers in data centers and accessible via the web. The term is everywhere; we store and process the data of all our connected objects on it. I visited Safe Host to understand how a data center works.

This is where data centers come in. They are where the servers that store our data are physically located. Without necessarily knowing it, we use data from the world’s largest data centers, such as those of Google, Amazon, and UPS to name but a few, every day.

The global public data center market is currently worth over $25 billion, with a strong upward trend expected in the coming years. There are over 3,300 data centers worldwide, over 130 of which are in France, which is the equivalent of about 100,000 servers in our beautiful country.

Why store your data on a server in a datacenter?

Data centers host physical infrastructure. In other words, they provide a storage environment for servers, which includes space, power, cooling, security, and connectivity. They do not have access to the data on the servers.

There are several advantages to storing data on these remote servers in these data centers, but this choice depends on the characteristics of the data to be stored (volume, criticality, etc.).

First and foremost, and it is their business to be so, they are real fortresses. Your data is quickly and easily accessible to you while remaining highly secure. Achieving the same level of performance on a private server is complex and expensive to set up and maintain, so not every business can afford to do this.

The other advantage is that a data center can make life easier when you have data to store. Most of the time (and in the majority of data centers), the customer manages its own IT infrastructure; very often, data center employees do not even have access to the customer’s room. Safe Host, however, offers a hardware maintenance service (installation of machines, cabling, labeling, etc.).

The only choice to be made is whether to delegate the security of your data to another entity. This decision depends on the company’s means and the criticality of the data to be stored.

I recently had the opportunity to visit a brand-new data center in Switzerland, Safe Host. The tour was very informative, and this article reviews what I learned.

How does a datacenter work? My tour and impressions

Arriving on-site, I found myself in front of a huge block of concrete without windows. This was the data center: imposing and uniform in color, this huge building intimidated me at first. Let’s start from the beginning: what is a data center? A data center is simply a building, large or small, containing storage servers that host a certain volume of data. These buildings are highly protected against fire and flooding, carefully sited, and secured both physically and at the information-system level, as they often store a lot of potentially sensitive data from companies. We will look at how they work from the inside later on.

The company that owns this datacenter is called Safe Host

Safe Host is a start-up that was created in the 2000s; it currently has three data centers in Switzerland. The data center we visited in Gland is the most recent and largest in Switzerland!

The data center has a surface area of 14,000 m² over four floors filled with computer servers. The building’s entire technical infrastructure (electricity, connectivity, cooling) is located in the basement (generators, batteries, inverters, air conditioning units, etc.).

Security is of course very important for this type of building. The windowless walls are more than 20 meters thick in the center of the building.

SUMMARY OF MY TOUR AT SAFE HOST DATACENTER

After an identity check at the entrance, we swapped our identity cards for an access badge.

We went through an entrance gate and then a highly secure airlock with a fingerprint sensor. We came out in front of a huge elevator to take us down to the basement.

Long white corridors, still under construction, branched off on all sides, and a huge Caterpillar generator stood behind one door. With the power of two Bugatti Chirons running flat out, this generator is used to supply the building in case of a mains power failure.

This is when I started hearing the word redundancy, which became the watchword of the tour. The principle: all systems are doubled in case of a technical problem. The data center’s priority is to ensure continuity of service, i.e. to guarantee, 24/7, the non-stop operation of the systems that allow the servers to operate optimally (electricity, cooling, connectivity, security).
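To get a feel for why doubling matters, here is a minimal sketch of the usual availability arithmetic for redundant units. It assumes the copies fail independently and that one working copy is enough; the 99% figure is a made-up illustration, not a number I was given at Safe Host.

```python
def redundant_availability(single: float, copies: int = 2) -> float:
    """Availability of `copies` independent units when one working unit is enough."""
    if not 0.0 <= single <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    if copies < 1:
        raise ValueError("need at least one unit")
    # The whole group is down only if every copy is down at the same time.
    return 1.0 - (1.0 - single) ** copies


# Hypothetical unit that is available 99% of the time, doubled.
print(f"{redundant_availability(0.99):.4%}")
```

Under those assumptions, two units that are each up 99% of the time are jointly up about 99.99% of the time. Real failures are rarely fully independent, so this is an upper bound rather than a promise.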

Our tour continued with the different inverters, which take the high voltage delivered directly by a Swiss company and convert it into stable lower voltage DC. If there is a power cut, the generators take 15 seconds to kick in and start supplying the site. Batteries take over for those 15 seconds before the generators start up.

Interestingly, the inverters are smart and manage their own batteries by testing them regularly and running charge and discharge cycles.
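The 15-second gap gives a rough idea of how much energy the batteries have to deliver. The sketch below is simple arithmetic (power times time); the 1,000 kW load is a hypothetical value chosen for illustration, and only the 15 seconds comes from the tour.

```python
def bridge_energy_kwh(load_kw: float, bridge_seconds: float = 15.0) -> float:
    """Energy (kWh) the batteries must supply while the generators start."""
    if load_kw < 0 or bridge_seconds < 0:
        raise ValueError("load and duration must be non-negative")
    # kW * s gives kJ; dividing by 3600 converts to kWh.
    return load_kw * bridge_seconds / 3600.0


# Hypothetical site load of 1,000 kW, bridged for the 15 seconds mentioned above.
print(f"{bridge_energy_kwh(1000.0):.2f} kWh")
```

In practice, batteries are sized with a generous margin on top of a figure like this, since a generator may need more than one start attempt.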

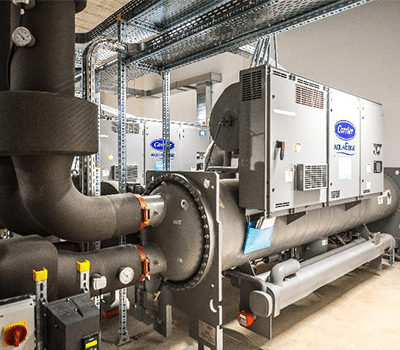

We moved to another area to talk about the other critical element of data centers: air conditioning and cooling. We still had not seen or heard anything about servers. This was perfectly normal, as we were in the “submerged part of the iceberg”, at the heart of the infrastructure on which the site depends to operate. The building needs to be properly air conditioned because the servers will fail if they overheat. This is why air conditioning and cooling are critical for data center stability.

We opened a huge door and came face to face with enormous tanks. Everything here is outsized: the tanks are 6 meters high, and the pumps are gigantic. All this equipment supplies the air conditioners on the platforms upstairs, where the servers are stored in their racks.

What struck me most here was the noise of the pumps, which activate according to the outdoor temperature; the network is smart and tries to save as many resources as possible. After a little more than an hour and a half in the building, we finally arrived on the third floor in front of several server rooms, called IT rooms.

These shared rooms, where you reserve your plot, offer an ideal environment for servers thanks to the various systems we were shown earlier in the basement. These rooms are equipped with air conditioners. They are the cornerstones of all data centers. The chilled air arrives in a corridor, is drawn in by the servers, and comes out hot on the other side.

We ended the tour on the roof. This is where the heat collected in the previous stage is discharged. The water also arrives up there to be purified by various systems as it runs along a slight incline on the roof. It is then sent down to repeat its cooling cycle. The water is in a closed circuit here and can only be lost through evaporation.

Here too, redundancy is planned:

- Lake Geneva is nearby, so they can draw water directly from it, if necessary.

- They can also use the water from a lake belonging to a neighboring farmer, if need be.

- Rainwater is also collected on the roof and stored in tanks, just in case.

The technical part of our tour ended here. It was question time for me now.

Directions for the data center of the future: will a 100% green datacenter see the light of day?

In addition to learning a lot about an environment that I knew nothing about, this tour around the data center left me with new questions, and especially one big one:

How can the ecological impact of these data centers be limited? When will a 100% green data center see the light of day? Is it even possible? Interesting initiatives in this field are being developed, and I saw the effort made in this direction at Safe Host, which changed my original view on this issue.

Safe Host is one of the most recent data centers in Europe, and environmental considerations were integrated into its design. I saw several examples of this during the tour, but I did not mention them earlier.

- Regulation of site cooling power consumption by avoiding unnecessary cooling of a room that is already at the right temperature.

- Recycling the heat generated by the servers to heat nearby offices and homes in the longer term (heat redistribution network).

- Solar panels on the roof to produce electricity used by the office building.
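The first point, not cooling a room that is already at the right temperature, is commonly done with a deadband (hysteresis) around a target temperature. The sketch below is my own illustration of that general idea, not Safe Host's actual control logic; the function name, the 24 °C target, and the 1 °C deadband are all assumptions.

```python
def cooling_needed(room_temp_c: float, cooling_on: bool,
                   target_c: float = 24.0, deadband_c: float = 1.0) -> bool:
    """Decide whether a room's cooling should run (hypothetical thresholds).

    Start cooling above target + deadband, stop once the room is back at or
    below the target, and otherwise keep the current state.
    """
    if room_temp_c > target_c + deadband_c:
        return True
    if room_temp_c <= target_c:
        return False
    return cooling_on


# Made-up temperature readings for one room over time.
state = False
for temp in [23.5, 24.6, 25.2, 24.4, 23.9]:
    state = cooling_needed(temp, state)
    print(f"{temp:.1f} °C -> cooling {'on' if state else 'off'}")
```

The deadband keeps the cooling from switching on and off every time the temperature wobbles around the target, which is where the energy savings come from.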

There are also interesting developments in server design and storage in this direction, such as Immersion4, which proposes a completely new way of housing servers. By immersing them in an oil bath of undisclosed composition, the company promises to dramatically reduce data center power consumption while radically improving computing power.

The challenge is probably to ensure that this trend and effort develop enough for big data centers to take a step beyond greenwashing. A real transition will be needed for these solutions to remain viable.

We can also consider the user’s responsibility for what goes on at the other end of the chain, and the relevance of reducing the volume of data to store. Does the data center model need to shift towards personal clusters, which are more secure and ecological?

Edge IoT seems a good subject to follow, and it could eventually propose solutions for the future.